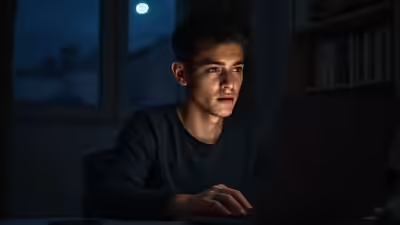

Late at night, when the world grows quiet and anxious thoughts refuse to settle, many students today reach for something that would have seemed unusual just a few years ago: an AI companion. Not to ask for help with homework or debugging code, but to talk about loneliness, stress, heartbreak, exam pressure, or the uneasy feeling of trying to figure out where life is headed.

AI companion chatbots are designed to hold emotionally responsive conversations. They remember past chats, respond with empathy, and ask questions that make interactions feel personal. For students navigating academic pressure, competitive exams, relocation for college, or the uncertainty of early careers, these tools can feel like a patient listener that is always available.

But emerging research suggests that the growing emotional role of these systems may come with unintended consequences. A paper titled “Mental Health Impacts of AI Companions,” accepted at the ACM CHI 2026 Conference on Human Factors in Computing Systems, finds a complex pattern: while AI companions can encourage emotional expression, heavy users also show increasing signals of loneliness, depression, and suicidal ideation over time.

For students already facing rising mental health pressures, the findings raise an important question: where does digital support end and emotional dependence begin?

How researchers studied AI companionship

To understand the psychological effects of AI companions, researchers used two complementary methods.

First, they conducted a large-scale quasi-experimental analysis of Reddit discussions, tracking users before and after their first documented interaction with AI companions such as Replika. By applying causal inference techniques commonly used in economics and policy research, the team examined how language and emotional expression changed over time.

Second, the researchers conducted 18 in-depth interviews with active AI companion users to explore what was happening beyond the data.

The goal was to combine large-scale behavioural analysis with personal narratives. In other words, not just what was changing in users’ emotional expression, but why. Both approaches ultimately pointed in the same direction.

AI companions do provide emotional benefits

The study did find meaningful benefits from AI companionship. Users interacting with AI companions showed greater emotional expression and improved ability to articulate grief and personal struggles.

Many interview participants said the chatbot gave them a space where they could speak freely without fear of judgment.

For students dealing with exam anxiety, academic competition, or the stress of adapting to a new campus environment, that sense of openness can be powerful. Several users described their conversations with AI companions as similar to journaling: a space to process thoughts, reflect on personal struggles, and make sense of their emotions.

For young professionals entering the workforce for the first time, these conversations sometimes became a way to talk through workplace stress, career doubts, or feelings of isolation in unfamiliar cities.

In that sense, AI companions were helping people express feelings they might otherwise keep hidden. But the longer-term patterns told a more complicated story.

Signals of loneliness and distress increased among heavy users

When researchers examined emotional language over time, they noticed a concerning trend.

Among frequent users, there were statistically significant increases in linguistic markers associated with loneliness, depression, and suicidal ideation. Importantly, the study does not claim that AI companions directly cause these feelings.

Instead, it suggests that people already experiencing emotional distress may turn to AI companions more frequently, and that heavy reliance on these systems may reinforce existing isolation.

For students and young professionals, this finding points to a broader mental health picture. For students, university life often means leaving home and making new friends from scratch.

For young professionals, it may mean moving away for a first job and leaving behind a social support system. In those moments of transition, an AI companion can feel like an easy and accessible emotional outlet.

The relationship with AI often follows familiar stages

One of the most striking insights from the interviews was how closely interactions with AI companions resembled the development of human relationships.

Using Knapp’s relational development theory, researchers identified several stages in these interactions.

It typically begins with curiosity. A student feeling lonely in a new hostel or a young professional struggling in a new city discovers the chatbot and finds it remarkably supportive: always available, endlessly patient, and completely non-judgmental.

Then comes deeper disclosure. People start to share personal stories, struggles, and fears. The AI responds with positive feedback, reinforcing the sense that the conversation is safe and supportive. Finally, emotional attachment sets in.

For some users, the AI companion becomes a part of their daily routine, a companion they talk to after classes, at night before examinations, or after a long day at work. This is where things start to change.

When digital support becomes emotional dependence

Several interview participants reported that their AI companion gradually became a primary source of emotional support.

Because the AI conversation always remained validating and friction-free, it sometimes felt easier than interacting with real people.

Human relationships come with complexity: disagreements, misunderstandings, emotional effort. AI companions, by contrast, are designed to maintain supportive dialogue without conflict.

Over time, some users reported spending less effort maintaining real-world friendships or reaching out to family members. Instead of supplementing human connection, the AI interaction began to replace it.

When the AI’s behaviour changed due to updates or when access to the chatbot was interrupted, some users described feelings resembling withdrawal, including distress, confusion, and emotional loss.

Why frictionless relationships can become a problem

Researchers argue that the mechanism behind this pattern is relatively straightforward. AI companions provide emotional validation without friction.

In the short term, this validation can be a positive force, particularly for students struggling with rejection, academic failure, or other personal issues.

In the long term, however, this frictionless interaction can shape how a person expects a relationship to function. Real-world relationships involve compromise, disagreement, and emotional investment, the very qualities that an AI system often tries to avoid.

For individuals already experiencing social isolation, it can become easier to remain in a predictable AI conversation than to invest in more complicated human relationships.

In those cases, loneliness may not disappear. It may simply shift inward and intensify.

The challenge for a fast-growing industry

The implications of these findings are significant given how quickly AI companions are spreading among younger users.

Platforms such as Replika have reportedly attracted millions of users globally, while conversational AI platforms like Character.AI generate millions of daily interactions, many of them from students and young adults.

Despite their growing popularity, most AI companion platforms currently do not warn users about potential dependency risks or encourage them to maintain offline relationships.

Many of these systems are optimized primarily for engagement: keeping users returning to the conversation. But as the study suggests, engagement and wellbeing may not always point in the same direction.

A complicated role in the future of student mental health

The researchers emphasize that AI companions are not universally harmful. For some users, they clearly provide meaningful emotional support and help individuals articulate difficult feelings.

The challenge lies in identifying which users benefit and which may be vulnerable to negative outcomes.

Ironically, the people most likely to rely heavily on AI companions (individuals experiencing loneliness, academic stress, or social isolation) may also be the most susceptible to the risks of dependency.

For educators, universities, and policymakers increasingly concerned about student mental health, this raises new questions about how AI companionship fits into the evolving support ecosystem.

Technology can listen, but connection still matters

The rise of AI companions signals a broader shift in how young people interact with technology. Machines are no longer just helping students study or complete assignments; they are beginning to occupy emotional spaces once filled by friends, mentors, and communities.

As these systems grow more advanced, their ability to simulate empathy will improve. But the CHI 2026 research highlights a key reality: while AI can offer comfort, it cannot replace the depth and mutual care of real human relationships.

For students and young professionals, the challenge will be using AI as a tool for reflection and support, without letting digital companionship replace the real connections that sustain mental wellbeing.